Benefits of SPACE Framework Metrics

The SPACE framework is a strategic planning tool that helps organizations examine and improve their capabilities. The framework consists of five components: strategy, processes, architecture, culture, and environment. The following are some of the advantages of adopting the SPACE framework:

- Comprehensive approach: The SPACE framework takes into account not just the organization's strategy but also its processes, architecture, culture, and environment, so every component of the organization is considered throughout the planning process.

- Alignment: The SPACE framework helps keep the organization's strategy and capabilities in sync. By reviewing the organization's present capabilities and finding opportunities for development, it helps ensure that those capabilities are aligned with the strategic objectives.

- Focus on capabilities: Rather than only producing a strategic plan, the SPACE framework focuses on developing organizational capabilities. This helps ensure that the organization has the competencies it needs to execute its plan.

- Flexibility: The SPACE framework can be adapted to the specific demands of an organization, so each organization can tailor it to its own needs and challenges.

- Integration: For a more thorough strategic planning process, the SPACE framework can be combined with other strategic planning tools and frameworks such as SWOT analysis and Porter's Five Forces.

Overall, the SPACE framework can help organizations develop a more effective and complete strategic plan that is aligned with their capabilities and builds on organizational strengths.

How to use the SPACE Framework?

Organizations can apply the SPACE framework using the following step-by-step process:

Step 1. Identify the scope and goal of the analysis:

Before starting, define the scope and purpose of the analysis. This keeps the analysis targeted and relevant to the goals of the organization.

Step 2. Determine the major components of the SPACE framework:

SPACE is made up of five components: Strategy, Processes, Architecture, Culture, and Environment. Before starting the analysis, it is critical to understand these components and how they depend on one another.

Step 3. Assess the organization's present capabilities:

The next step is to evaluate the organization's current capabilities in each of the SPACE framework's five components. This can be done through a variety of means, including interviews, surveys, and data analysis.

Step 4. Identify opportunities for improvement:

After assessing the organization's present capabilities, the following stage is to identify areas for improvement. This can be accomplished by comparing the organization's present capabilities to best practices in the sector or by detecting gaps between the organization's capabilities and its strategic objectives.
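
As a rough illustration of the gap comparison described above, the sketch below scores each component and ranks the shortfalls against a target. The 1-to-5 scale, the scores, and the `rank_gaps` helper are all assumptions made up for this example; the framework itself does not prescribe a scoring method.

```python
# Hypothetical scoring sketch: the scale and all numbers are illustrative.
COMPONENTS = ("Strategy", "Processes", "Architecture", "Culture", "Environment")


def rank_gaps(current, target):
    """Return (component, gap) pairs where the target exceeds the
    current score, ordered from the largest gap to the smallest."""
    gaps = []
    for name in COMPONENTS:
        gap = target[name] - current[name]
        if gap > 0:  # components already at or above target are skipped
            gaps.append((name, gap))
    return sorted(gaps, key=lambda pair: pair[1], reverse=True)


# Made-up assessment results (Step 3) and strategic targets.
current = {"Strategy": 4.0, "Processes": 2.5, "Architecture": 3.0,
           "Culture": 3.5, "Environment": 4.0}
target = {"Strategy": 4.0, "Processes": 4.0, "Architecture": 4.0,
          "Culture": 4.0, "Environment": 3.5}

ranked = rank_gaps(current, target)
for name, gap in ranked:
    print(f"{name}: gap of {gap}")
```

The same function works whether the target comes from sector best practices or from the organization's own strategic objectives; only the `target` dictionary changes.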

Step 5. Create a plan of action:

The final stage is to create a plan of action to address the areas for improvement highlighted in the previous phase. Specific activities, deadlines, and accountable parties for each project should be included in the action plan.
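
One possible way to keep such a plan checkable is to record each item with its activity, deadline, and accountable party, then flag anything incomplete. The `ActionItem` structure, the helper names, and the sample entries below are hypothetical and only meant as a sketch.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ActionItem:
    component: str  # SPACE component the improvement area belongs to
    activity: str   # specific activity to carry out
    owner: str      # accountable party
    deadline: date


def incomplete_items(plan):
    """Return items that lack an activity description or an owner."""
    return [item for item in plan
            if not item.activity.strip() or not item.owner.strip()]


def by_deadline(plan):
    """Return the plan ordered by deadline, earliest first."""
    return sorted(plan, key=lambda item: item.deadline)


# Made-up example entries.
plan = [
    ActionItem("Processes", "Document the release checklist",
               "Release lead", date(2025, 3, 31)),
    ActionItem("Architecture", "Map service dependencies",
               "", date(2025, 2, 15)),
    ActionItem("Culture", "Run a quarterly retrospective",
               "Engineering manager", date(2025, 6, 30)),
]

for item in incomplete_items(plan):
    print(f"Missing owner or activity: {item.component}")
```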

Guidelines for Using the SPACE Framework

The SPACE framework gives teams a way to measure, study, and understand developer productivity. However, users should bear in mind that these metrics need to be tailored to their team’s unique needs and circumstances.

In that light, here are some broad guidelines for managers, leaders, and developers to find success while using the SPACE framework:

- Metrics should always exist within a constellation of other metrics that together paint a picture of dev team productivity. Managers and leaders should capture several metrics across multiple dimensions of the framework to get a well-rounded view of dev team activity. They should also complement quantitative data with perceptual metrics and qualitative data from surveys, feedback forms, 1:1 meetings, and other conversations. This fuller picture of the dev team’s reality enables leaders to make sound decisions.

- Leaders and managers should stay mindful of their biases, both conscious and subconscious, when approaching data and insights.

- Respecting employee privacy should be the team’s default. Measure and optimize all metrics at the team level. Every metric optimized for a team eventually affects individuals anyway, so focus on the productivity and performance of the team as a whole.

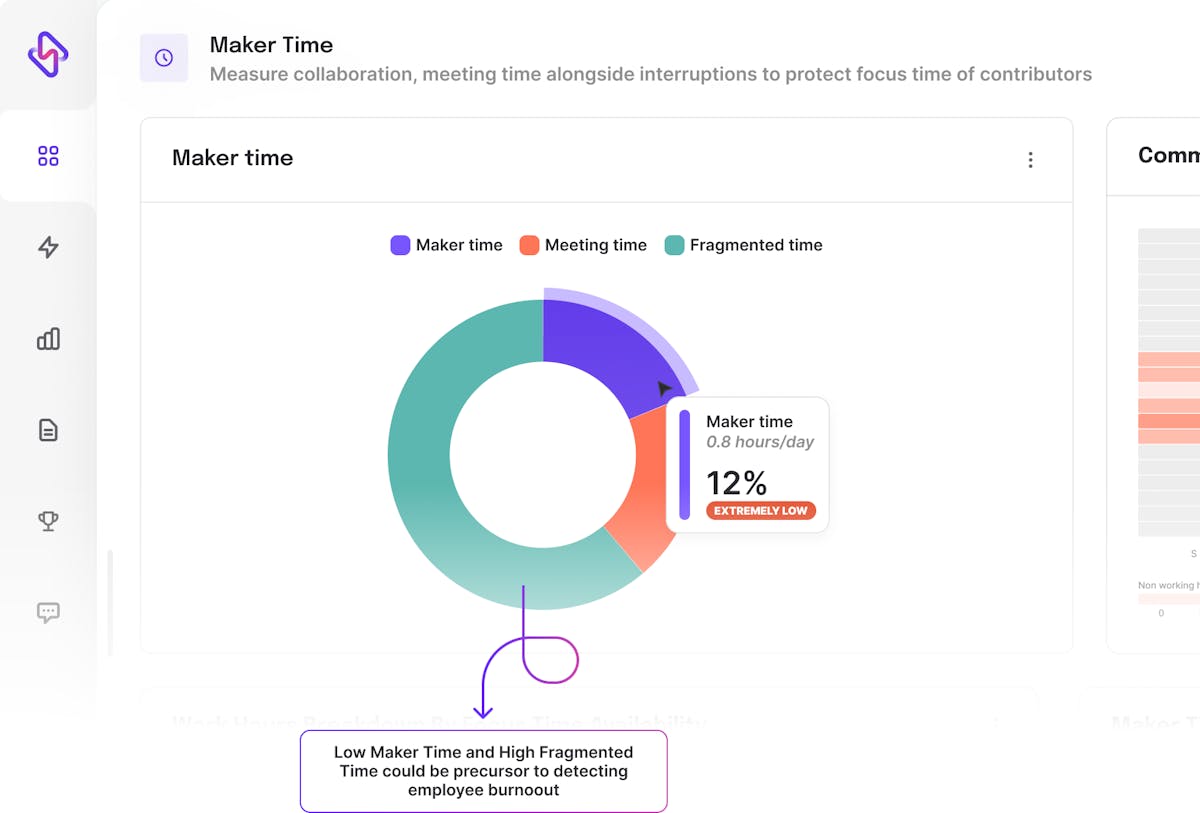

- Chasing productivity is futile if the gains are not sustainable. To sustain a team's performance, leaders have to prioritize developer experience and well-being. Organizations and teams should build employee-centric tooling, processes, and workflows so that a great employee experience translates into great products.
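
The guidelines above on team-level measurement and on pairing quantitative data with survey data can be sketched roughly as follows. The input deliberately carries no individual identifiers, and each metric is reduced to a per-team median. The team names, metric names, values, and the `team_summary` helper are assumptions for illustration, not part of any particular tool.

```python
from collections import defaultdict
from statistics import median


def team_summary(samples):
    """Reduce (team, metric, value) samples to one median per team and
    metric. No individual identifiers are accepted or kept."""
    grouped = defaultdict(list)
    for team, metric, value in samples:
        grouped[(team, metric)].append(value)

    summary = defaultdict(dict)
    for (team, metric), values in grouped.items():
        summary[team][metric] = median(values)
    return {team: metrics for team, metrics in summary.items()}


# Made-up samples: a quantitative metric next to a perceptual survey score.
samples = [
    ("payments", "cycle_time_hours", 20.0),
    ("payments", "cycle_time_hours", 30.0),
    ("payments", "cycle_time_hours", 50.0),
    ("payments", "survey_satisfaction", 4.0),
    ("payments", "survey_satisfaction", 3.0),
    ("search", "cycle_time_hours", 12.0),
    ("search", "cycle_time_hours", 16.0),
]

summary = team_summary(samples)
for team, metrics in summary.items():
    print(team, metrics)
```

Reporting a median rather than a list of raw values is one design choice that keeps the view at team level; other aggregates would work as long as individual data points are not exposed.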

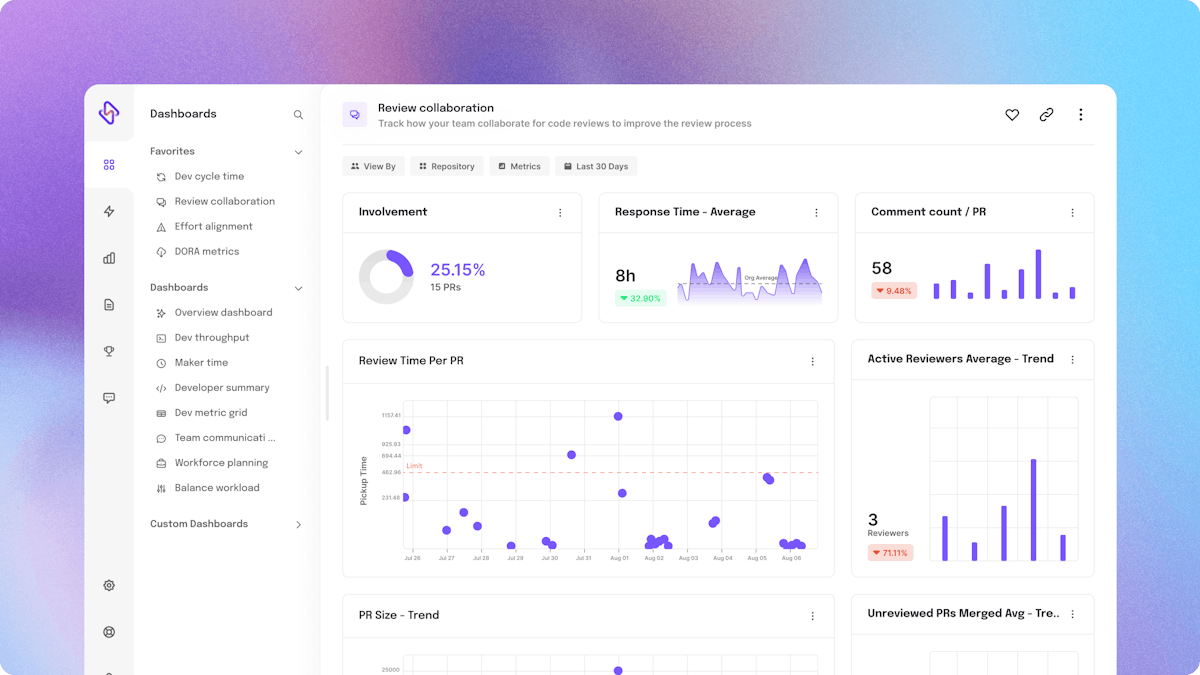

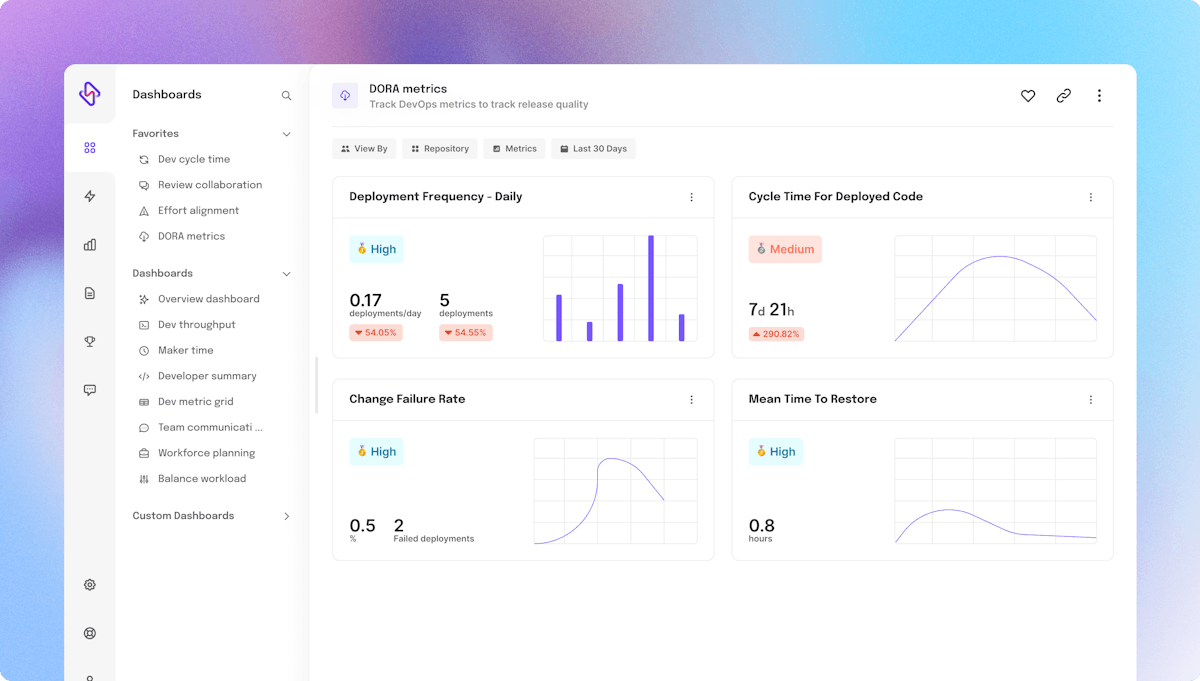

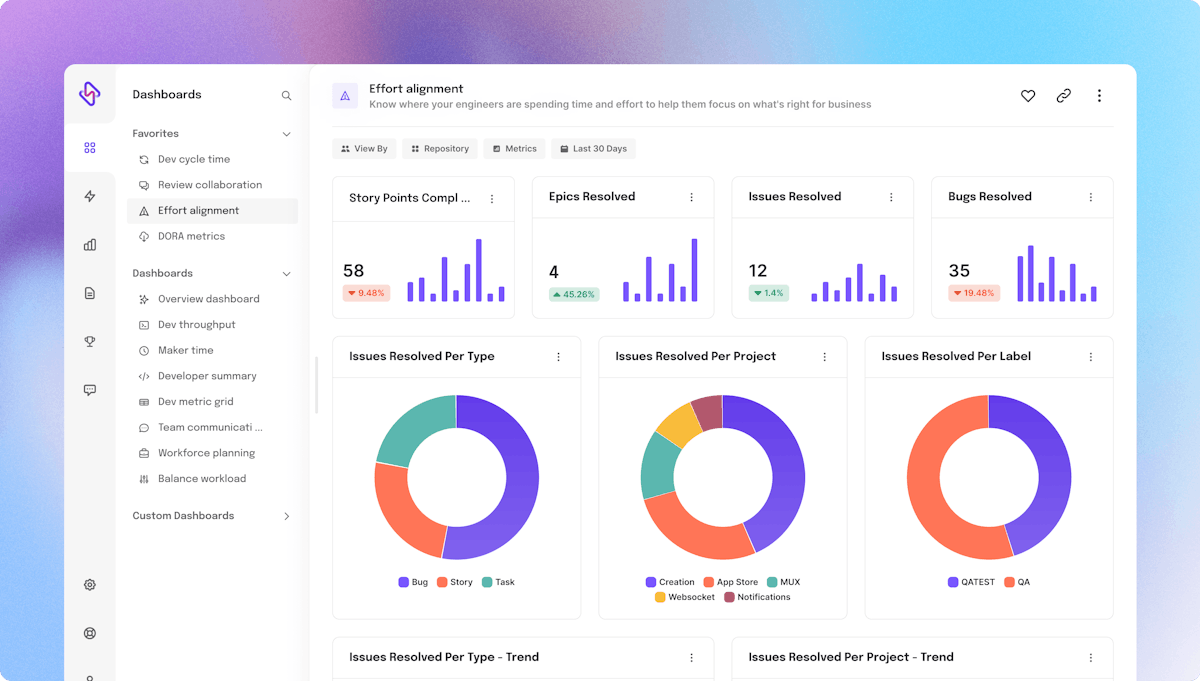

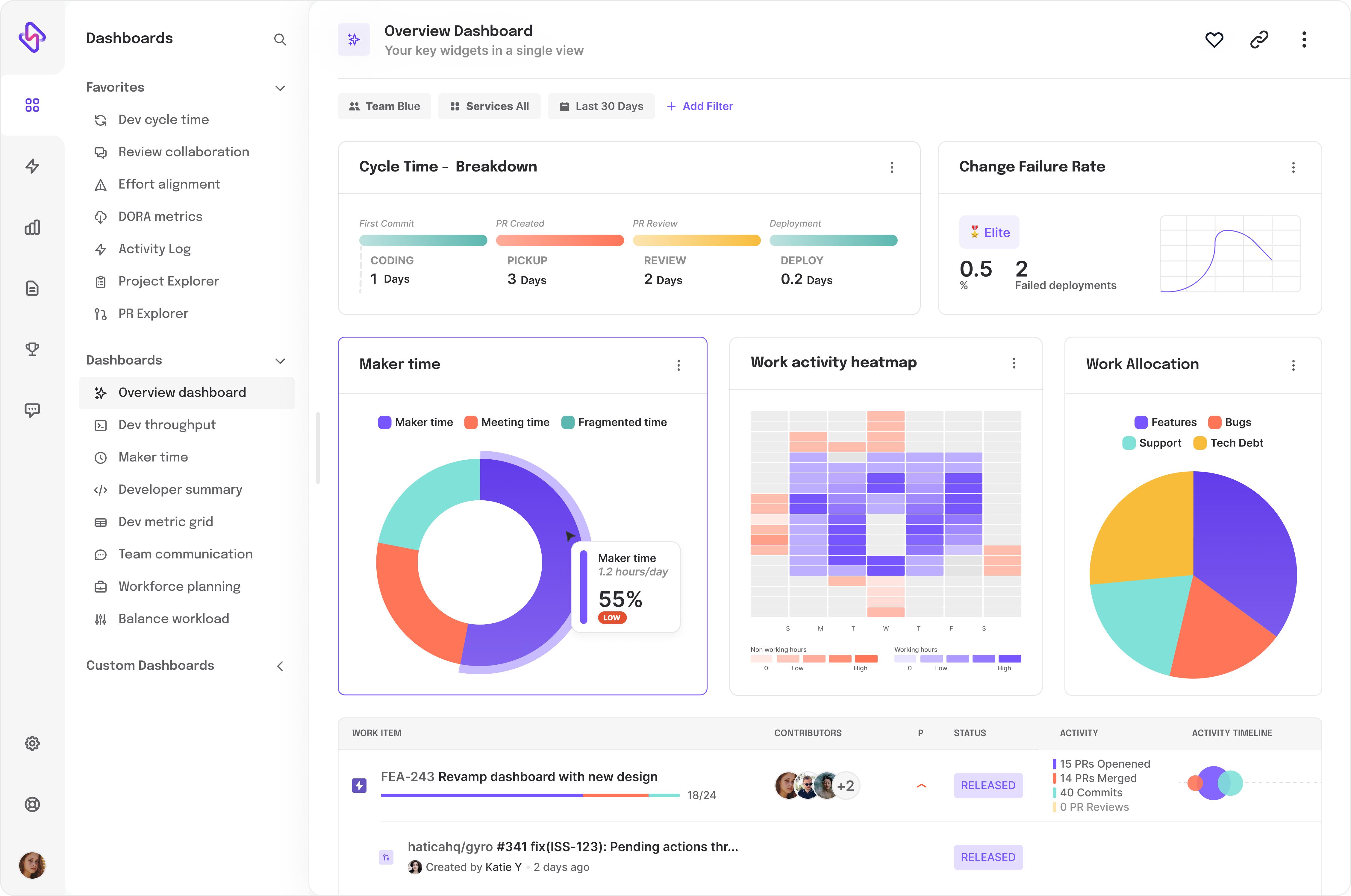

💡 Hatica is an engineering analytics platform that helps development teams build sustainable productivity and ensure optimum employee experience by tracking several SPACE metrics like cycle time, DORA metrics, focus and meeting time and more.

FAQs

1. How can organizations adjust their approach to measuring developer productivity to incorporate the SPACE framework?

Organizations can incorporate the SPACE framework by understanding the framework, identifying relevant metrics, assessing current measurement methods, aligning metrics with the SPACE framework, and regularly reviewing and adjusting the measurement approach as needed.

2. In what ways do SPACE metrics and DORA metrics differ in their scope of measurement?

SPACE metrics and DORA metrics are two different approaches to measuring organizational capabilities. The SPACE framework assesses an organization's capabilities across five elements (Strategy, Processes, Architecture, Culture, and Environment), while DORA metrics focus specifically on DevOps capabilities such as deployment frequency, lead time for changes, and time to restore service. Both frameworks can be valuable for assessing and improving organizational capabilities, but they have different areas of focus.

3. Why do organizations use SPACE metrics to understand developer productivity?

SPACE metrics provide a comprehensive method for assessing organizational capabilities that can be applied to many areas, including software development. Organizations use them to evaluate developer productivity by examining a company's capabilities across five dimensions (Strategy, Processes, Architecture, Culture, and Environment).